Machine learning system increases business revenue and reduces time-on-task by 80%.

Using machine learning to reduce employee stress, and building a workflow focused on error identification, meant auditors could complete an audit in minutes instead of the 30-45 minutes it took on average.

Our challenge: The existing closing process was archaic and built on a poorly implemented internal software system. As a result, it contained many unnecessary steps, and auditors relied on shortcuts to get the job done that should never have been necessary.

We addressed this by auditing the process through job shadowing and interviews, then modeling only the necessary interactions based on what we learned.

We then built a proof of concept and tested it with auditors to confirm we had captured all the necessary steps.

Our hypothesis: If we use machine learning to pre-process files and flag possible defects, we can reduce the time auditors spend reviewing documents by letting them review only the data that is potentially incorrect or missing.

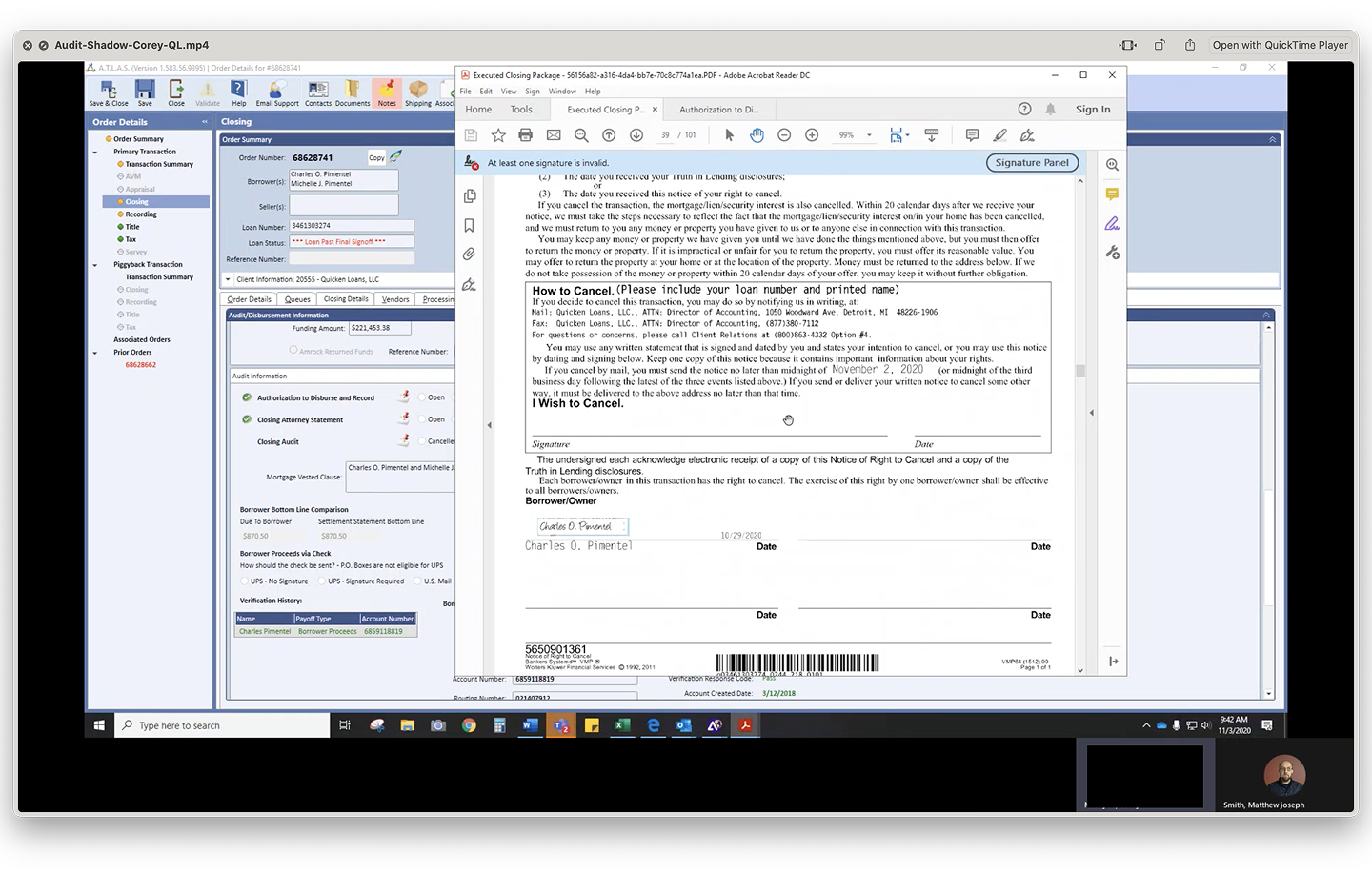

To make sure we fully understood the way people performed their jobs, and what shortcuts they may have invented, we shadowed volunteers while they performed a number of audits.

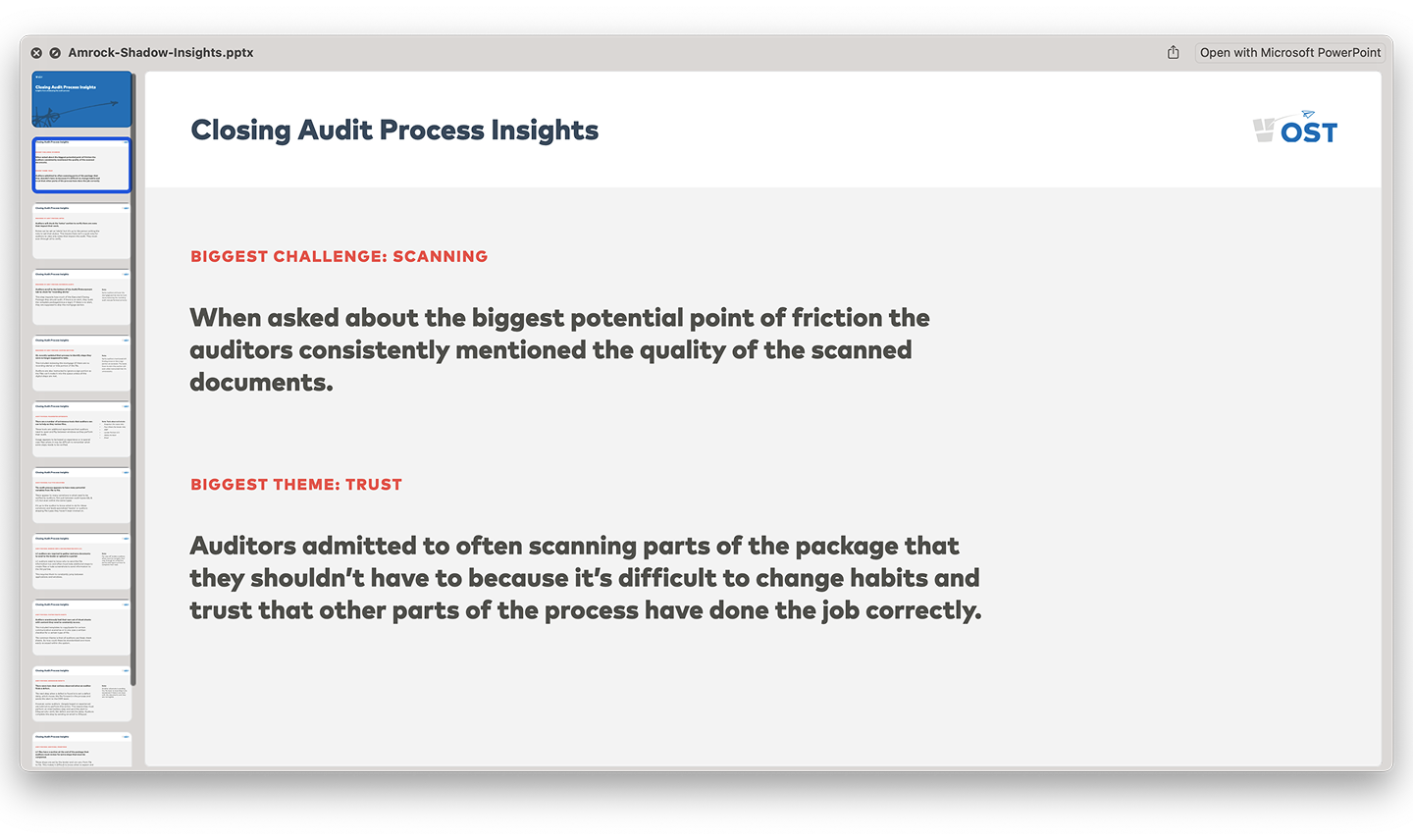

After interviewing a selection of auditors, we outlined the insights that would influence how people interacted with the new process we were building.

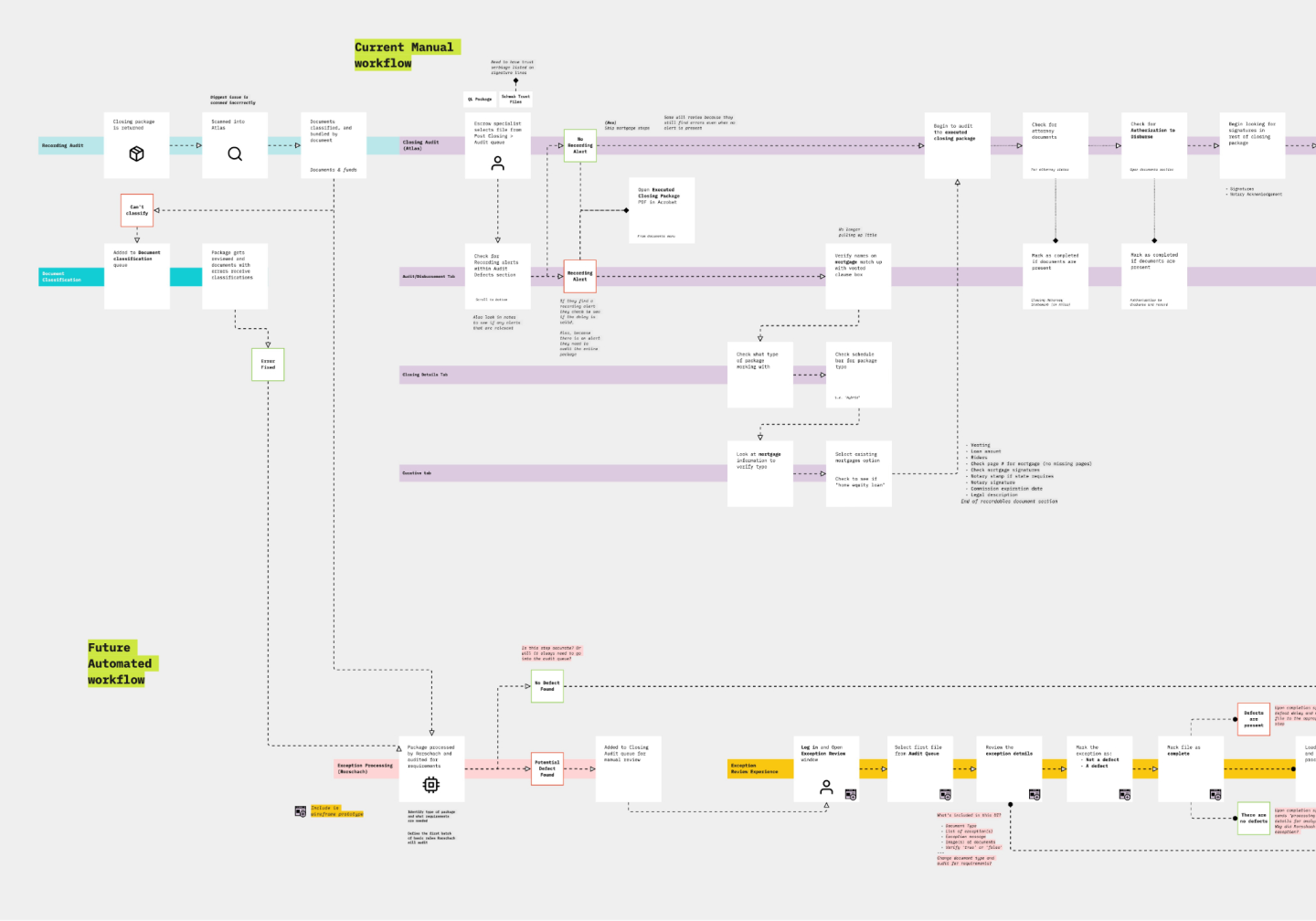

Based on the observations made during our shadowing sessions, we modeled the complete manual process as it existed at the time.

We then modeled the proposed new process to highlight the reduction in steps and define where human interaction would come into play.

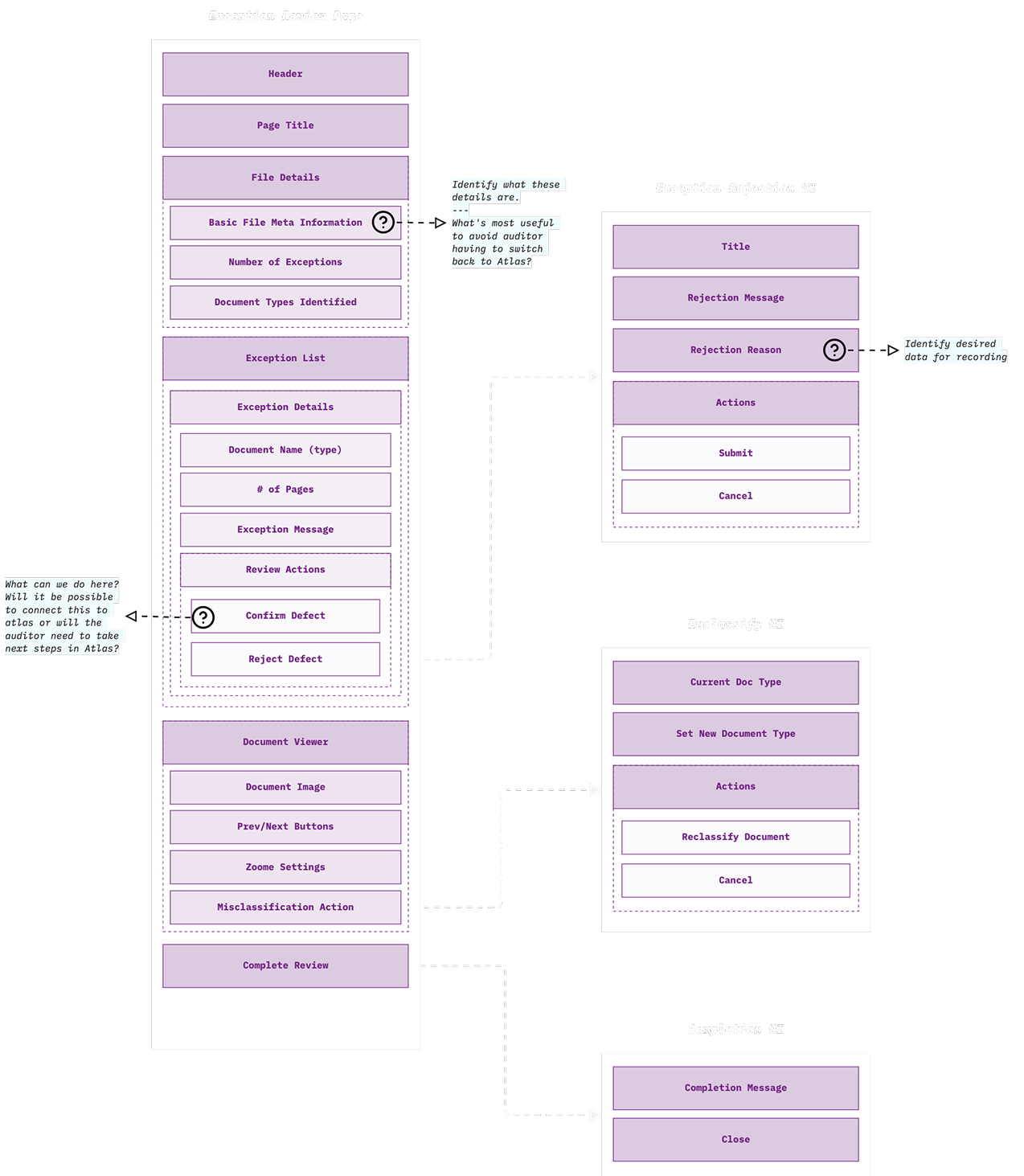

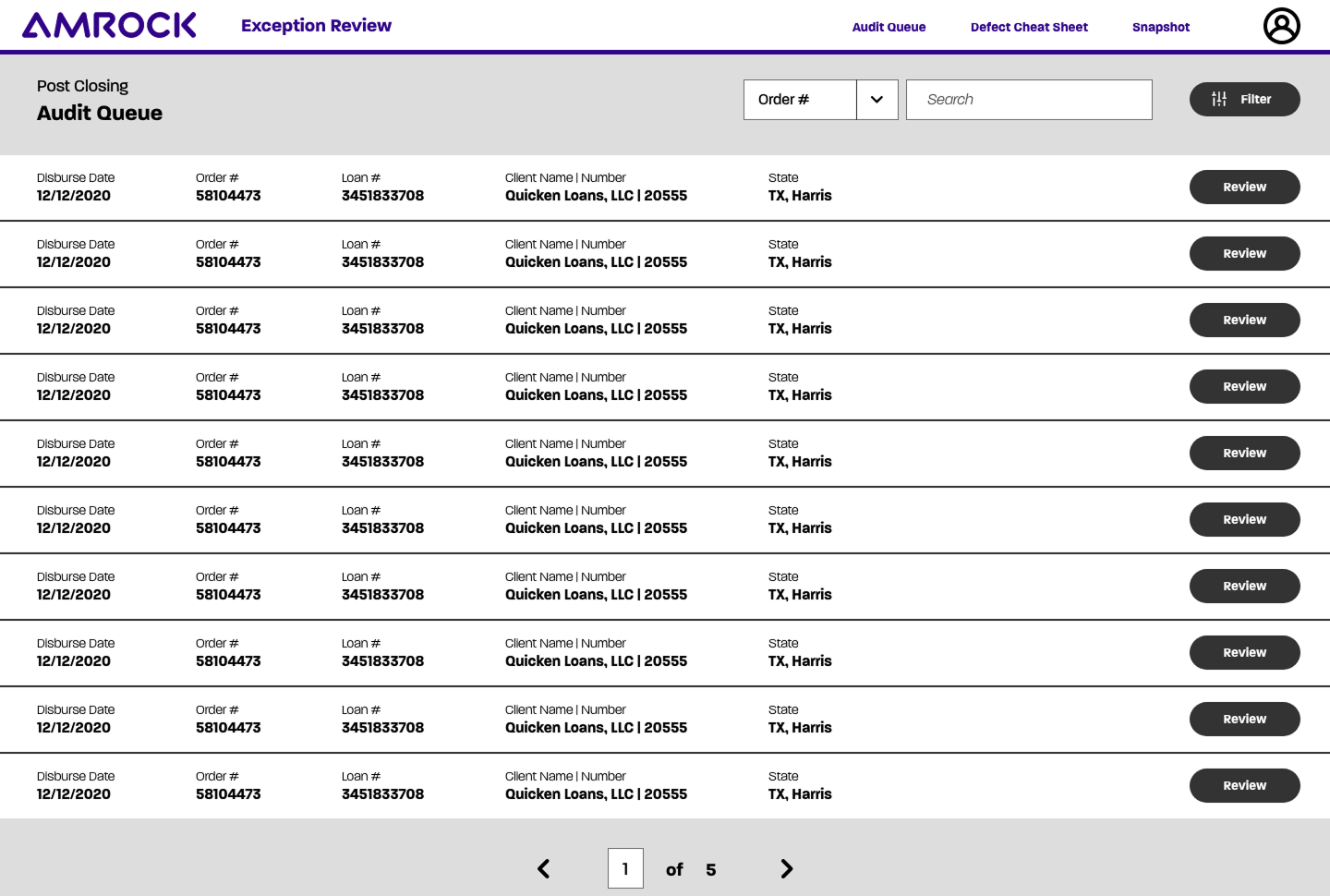

After confirming alignment across the team, we created priority guides to rank the content within the experience and define the details of the necessary interactions we would offer people.

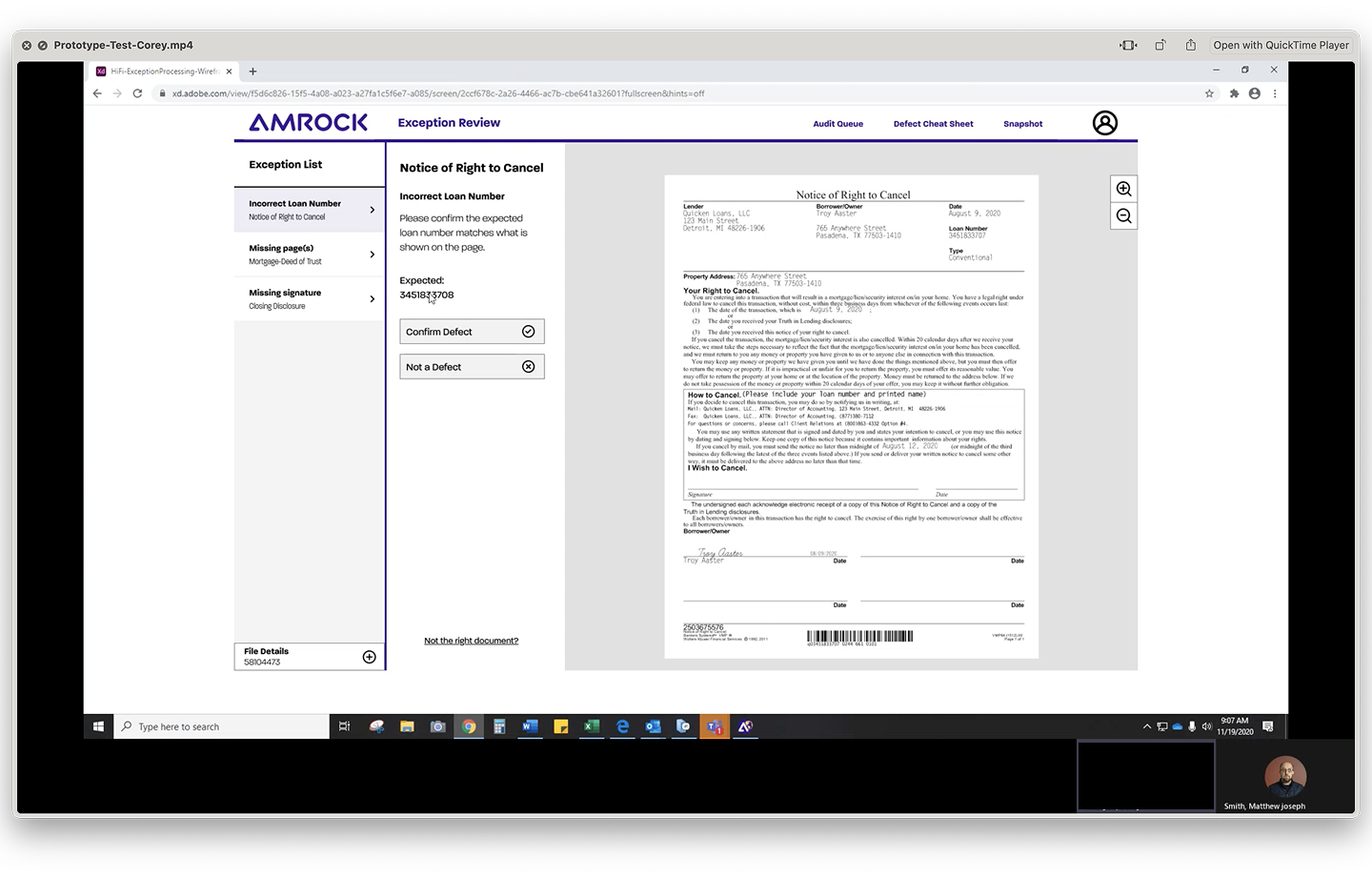

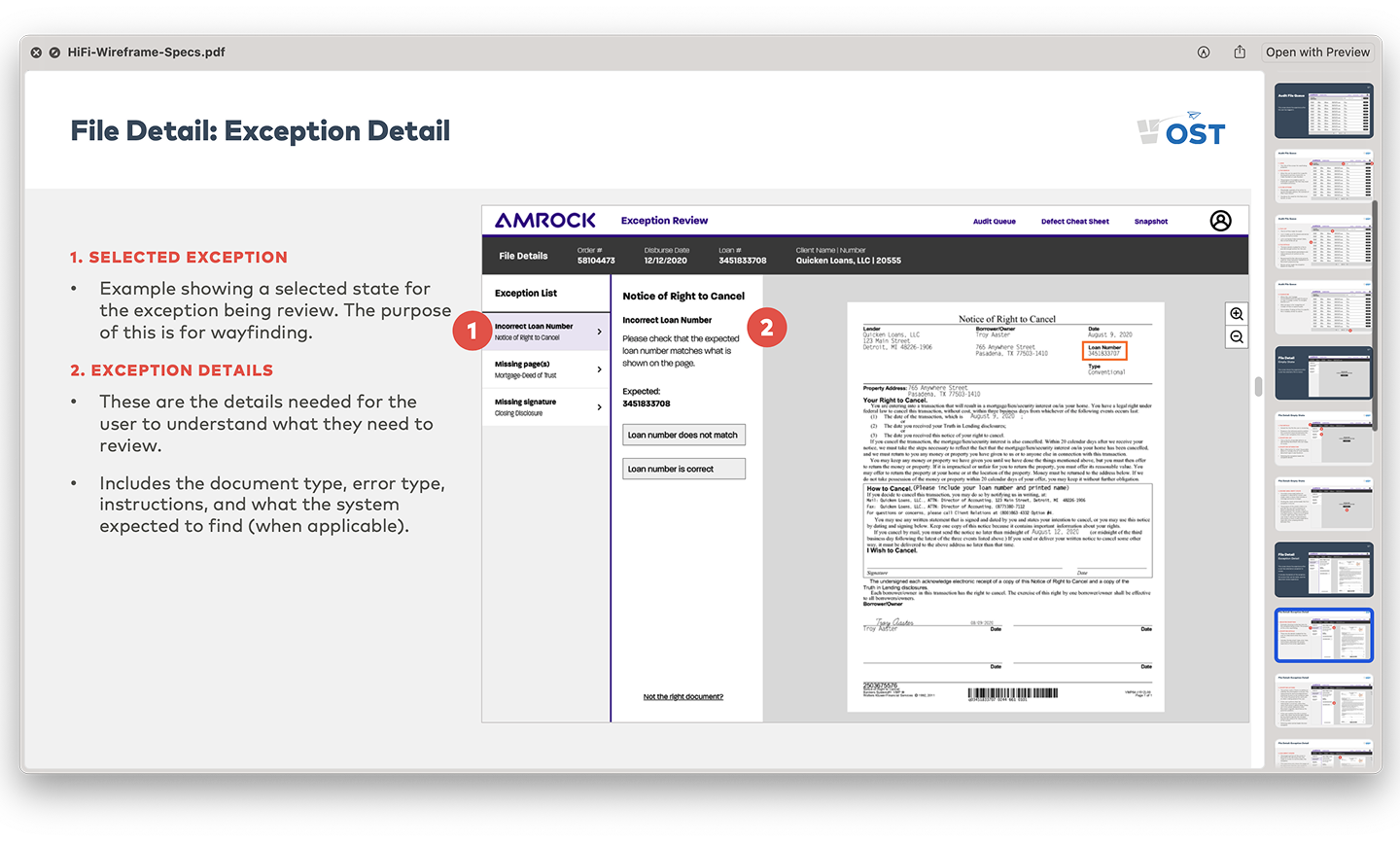

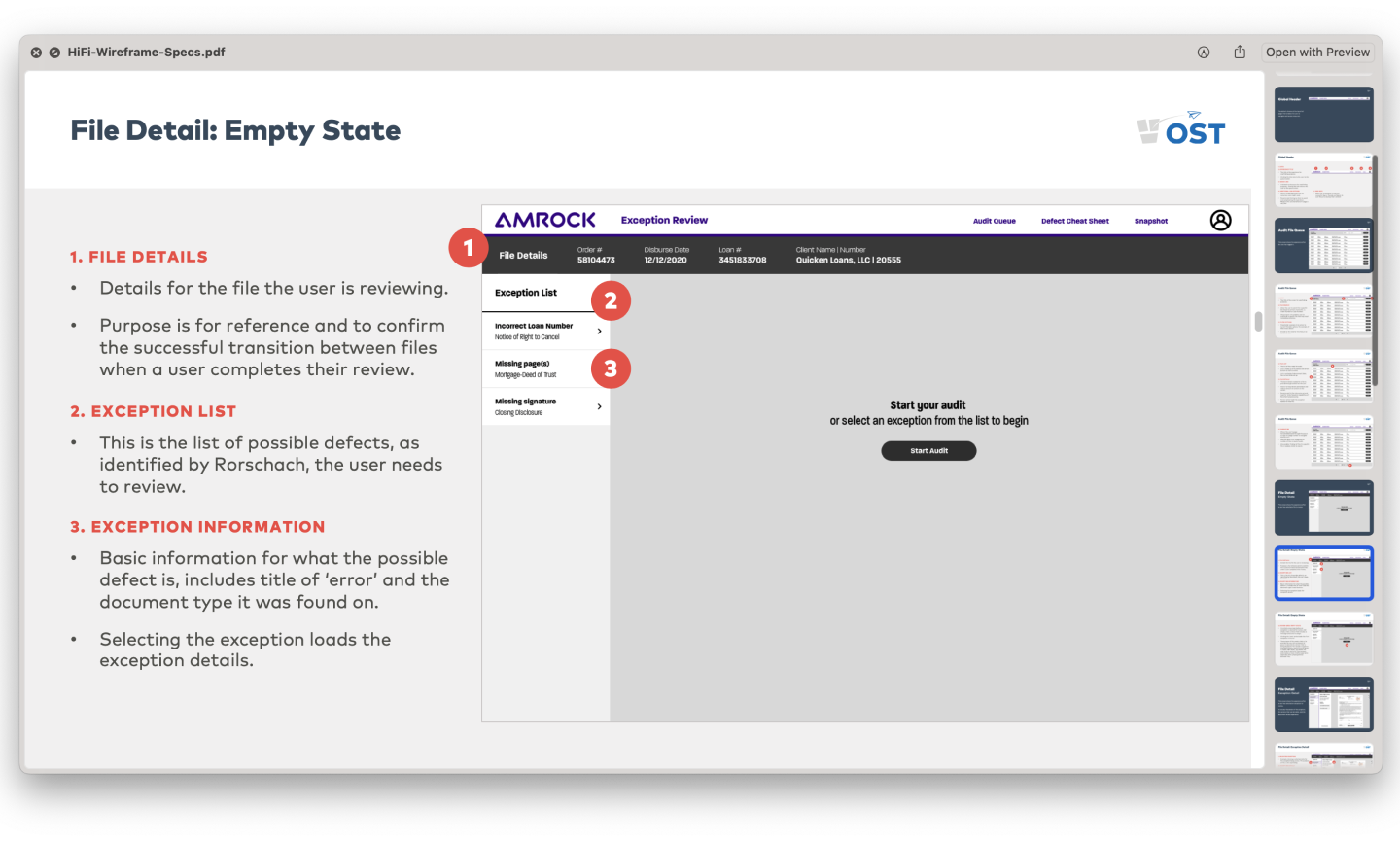

Next, we built a set of high-fidelity wireframes that focused on the content, to make sure it was clear, and let us validate the primary actions offered to the people using the software.

We then returned to our group of auditors to test our assumptions and surface any unexpected gaps.

Testing with auditors helped us identify changes that made the interactions clearer:

- Rewrote the copy in plain language specific to each exception

- Added a highlighted outline to mark where each exception occurred

- Removed pop-ups for assigning a delay or adding a reason for a rejection

Before handing off the agreed direction to the development team to implement the high-fidelity UI with our design system, we socialized the experience with the engineering team. This let us showcase the desired interactions and confirm that the architecture supported what we were recommending or, if it did not, identify the changes needed to support the experience.